Nvidia has halted production of H200 GPUs following Trump administration export controls targeting Chinese AI development, though the chipmaker is considering a permitting process to resume limited shipments. The ban directly impacts China's access to cutting-edge training hardware at a time when frontier model development depends critically on compute scale.

The restrictions fragment the global AI hardware market into security-aligned tiers. US companies that maintain Chinese operations or investment ties face new scrutiny. Anthropic received a supply chain risk designation from the administration even though it had secured $10B from Nvidia and resumed negotiations with the Pentagon after initially declining defense contracts.

OpenAI's robotics division lost its leadership over disputes about Pentagon deals, highlighting tensions between commercial AI labs and military partnerships. Separately, Oracle canceled a planned data center expansion with OpenAI, further constraining the infrastructure pipeline for large-scale model training.

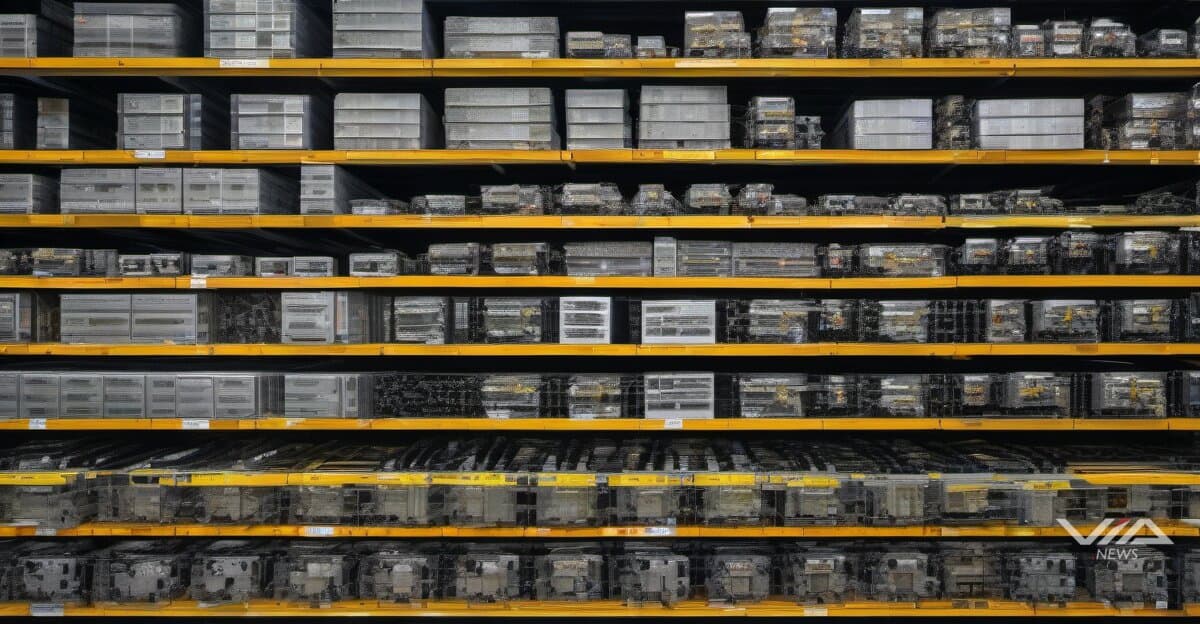

The H200 halt creates immediate training capacity constraints. Chinese AI labs had relied on Nvidia's high-end chips for parameter-heavy models. The H200 offers 141GB of HBM3e memory and 4.8TB/s of memory bandwidth, capacity that matters when model state has to be sharded across many accelerators, as it does at trillion-parameter scale. Without access, Chinese developers face a choice: scale back model ambitions, stockpile older hardware, or develop domestic alternatives that lag two to three generations behind.
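To put the memory figures above in perspective, the following back-of-the-envelope sketch estimates how many H200s are needed just to hold a model in memory. It is an illustration under stated assumptions, not a sizing tool: the function names are hypothetical, 2 bytes per parameter assumes 16-bit weights, and 16 bytes per parameter is a common rule of thumb for mixed-precision training with an Adam-style optimizer (weights, gradients, and optimizer state). Activations, KV caches, and parallelism overhead are ignored, so real deployments need more.

```python
import math

H200_MEMORY_GB = 141        # HBM3e capacity cited in the article
H200_BANDWIDTH_TBPS = 4.8   # memory bandwidth cited in the article


def min_gpus_to_hold(num_params, bytes_per_param, gpu_memory_gb=H200_MEMORY_GB):
    """Lower bound on GPUs needed to fit the model state in HBM.

    Assumption: only per-parameter state counts (no activations or overhead).
    """
    state_gb = num_params * bytes_per_param / 1e9
    return math.ceil(state_gb / gpu_memory_gb)


def full_memory_sweep_seconds(gpu_memory_gb=H200_MEMORY_GB,
                              bandwidth_tbps=H200_BANDWIDTH_TBPS):
    """Time to read the entire HBM once at the quoted peak bandwidth."""
    return gpu_memory_gb / (bandwidth_tbps * 1000)  # GB / (GB/s)


one_trillion = 1_000_000_000_000

# Assumption: 2 bytes/param for 16-bit inference weights.
inference_gpus = min_gpus_to_hold(one_trillion, bytes_per_param=2)

# Assumption: ~16 bytes/param for mixed-precision training state.
training_gpus = min_gpus_to_hold(one_trillion, bytes_per_param=16)

print(f"GPUs to hold 1T weights (16-bit): {inference_gpus}")
print(f"GPUs to hold 1T training state:   {training_gpus}")
print(f"One full HBM sweep: {full_memory_sweep_seconds() * 1000:.1f} ms")
```

Under these assumptions, a trillion-parameter model needs at least 15 H200s for its weights alone and over a hundred for training state, which is why per-chip memory capacity and bandwidth weigh so heavily in the hardware choices described above.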

US AI companies gain a temporary competitive advantage in training speed and model size, but lose Chinese market revenue. Nvidia's data center segment, which generated $47.5B in fiscal 2024, faces a potentially lasting loss of revenue from China, previously a top-three market. The company's exploration of a permitting process suggests it is willing to navigate the bureaucracy in exchange for partial market access.

The supply chain risk label attached to Anthropic signals that even US-based AI labs face compliance scrutiny based on funding sources and partnership choices. With Nvidia as both hardware supplier and investor, Anthropic exemplifies the entangled commercial relationships now subject to national security review.

This divergence in hardware access will compound over training cycles. US labs using H200s and successor Blackwell chips can train larger, more capable models faster, while Chinese teams optimize smaller architectures or wait for domestic chip alternatives. The gap in available compute is likely to show up as capability differences in reasoning, multimodal processing, and task performance, turning hardware policy into a lever for AI leadership.